More Opinion

Performative Parsimony

June 5th, 2026

Hipkins’ Policy Silence Doesn’t Matter

June 3rd, 2026

Lord Cooke’s Indictment

June 2nd, 2026

Sherman Tanks...

May 28th, 2026

A Picture Paints My Thousand Words

May 14th, 2026

Legal Elite Is Winning In The War For Constitutional Supremacy

May 6th, 2026

BSA BS Continues Unabated

April 28th, 2026

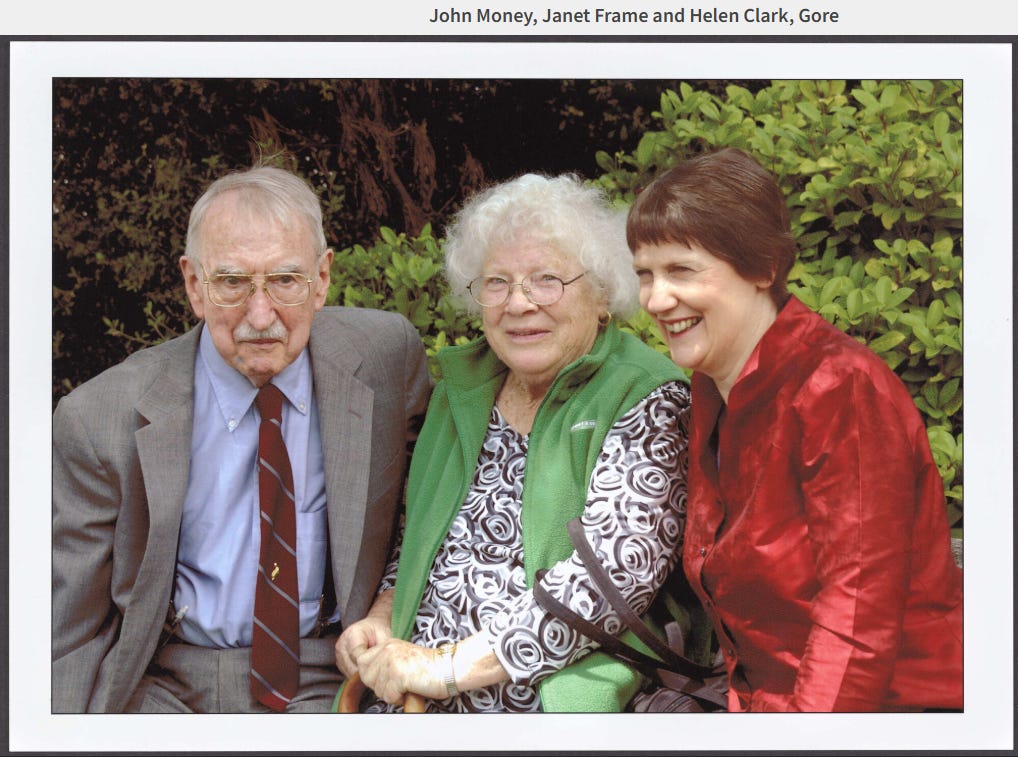

Clark Foul Play

April 13th, 2026

More Mumbo Jumbo From The BSA

April 1st, 2026

NZ First's Plan For Power Reform

March 25th, 2026

NCEA Literacy and Numeracy OpEd from NZ Initiative

Dr Michael Johnston: We need an urgent review of the way in which literacy and numeracy are taught in primary schools.
